High Bandwidth Memory Market Size and Share

High Bandwidth Memory Market Analysis by Mordor Intelligence

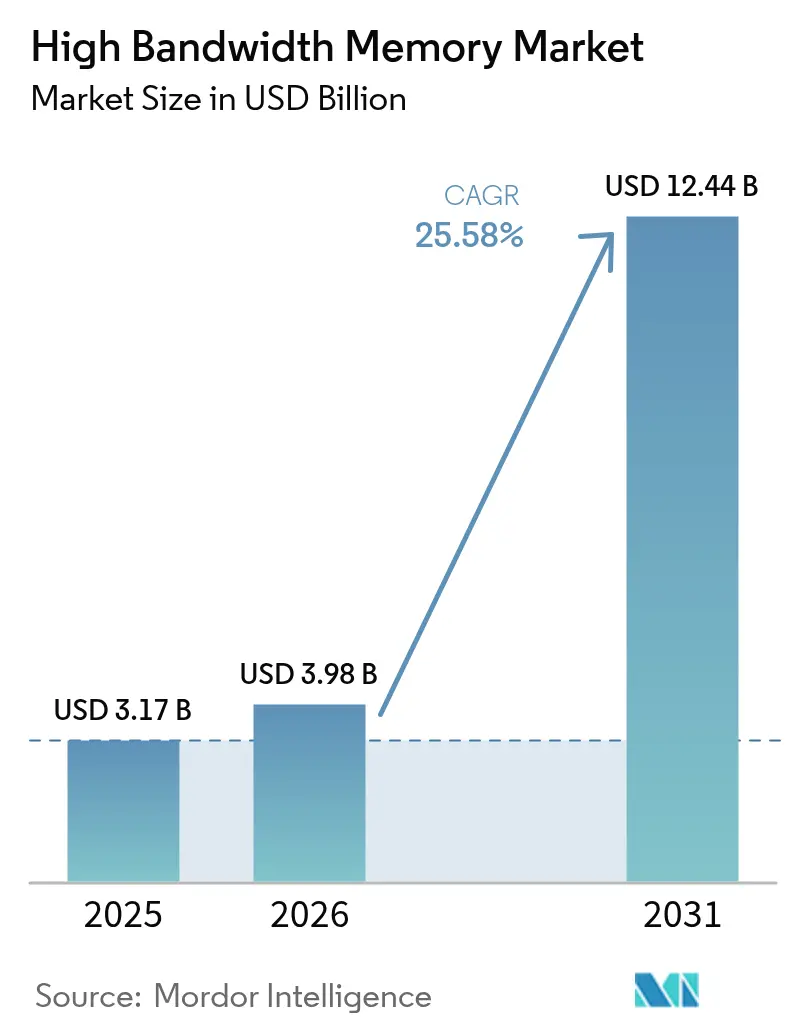

The high bandwidth memory market is expected to grow from USD 3.17 billion in 2025 to USD 3.98 billion in 2026 and is forecast to reach USD 12.44 billion by 2031, a 25.58% CAGR over 2026-2031. Sustained demand for AI-optimized servers, wider DDR5 adoption, and aggressive hyperscaler spending continued to accelerate capacity expansions across the semiconductor value chain in 2025. Over the past year, suppliers concentrated on TSV yield improvement, while packaging partners invested in new CoWoS lines to ease substrate shortages. Automakers deepened engagements with memory vendors to secure ISO 26262-qualified HBM for Level 3 and Level 4 autonomous platforms. Asia-Pacific’s fabrication ecosystem retained production leadership after Korean manufacturers committed multibillion-dollar outlays aimed at next-generation HBM4E ramps.
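The headline growth rate can be sanity-checked with the standard compound annual growth rate formula. The sketch below is illustrative only: the function name `cagr` is hypothetical, and it assumes five compounding periods between the 2026 and 2031 figures quoted above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the paragraph above (USD billion).
size_2026 = 3.98
size_2031 = 12.44

# Assumption: 2026 -> 2031 spans five compounding periods.
implied = cagr(size_2026, size_2031, years=5)
print(f"Implied CAGR 2026-2031: {implied:.2%}")
```

The result lands close to the quoted 25.58%; the small difference is consistent with the endpoint values being rounded to two decimals.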

Key Report Takeaways

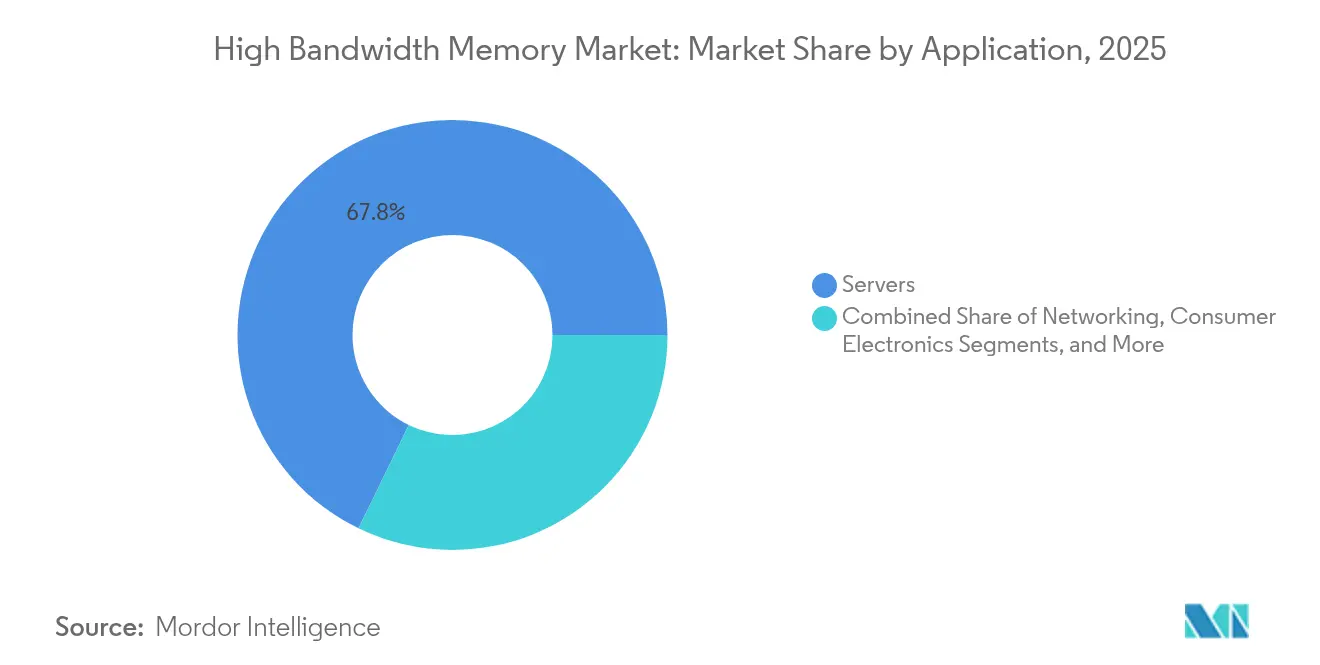

- By application, servers led with 67.80% revenue share in 2025, while automotive and transportation are projected to expand at a 34.18% CAGR through 2031.

- By technology, HBM3 captured 45.70% of 2025 revenue; HBM3E is advancing at a 40.90% CAGR to 2031.

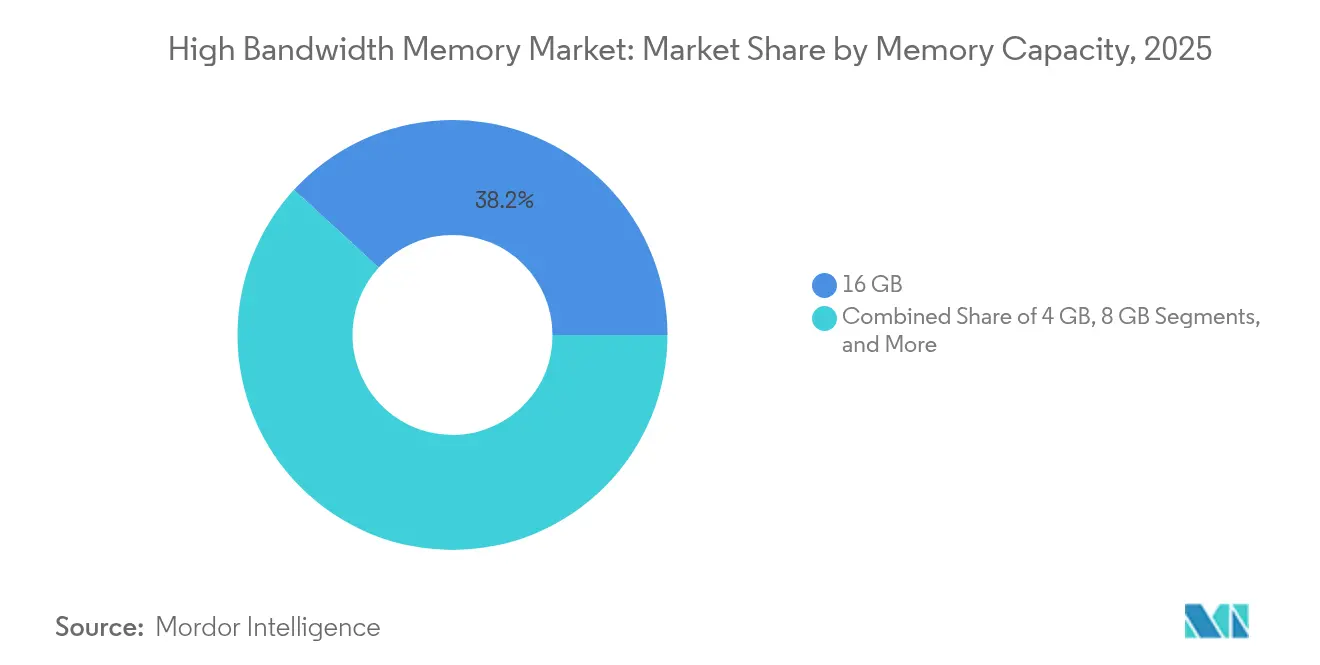

- By memory capacity per stack, 16 GB commanded 38.20% of the high bandwidth memory market in 2025; 32 GB and above is forecast to register a 36.40% CAGR.

- By processor interface, GPUs accounted for 63.60% market share in 2025, whereas AI accelerators/ASICs show a 32.00% projected CAGR.

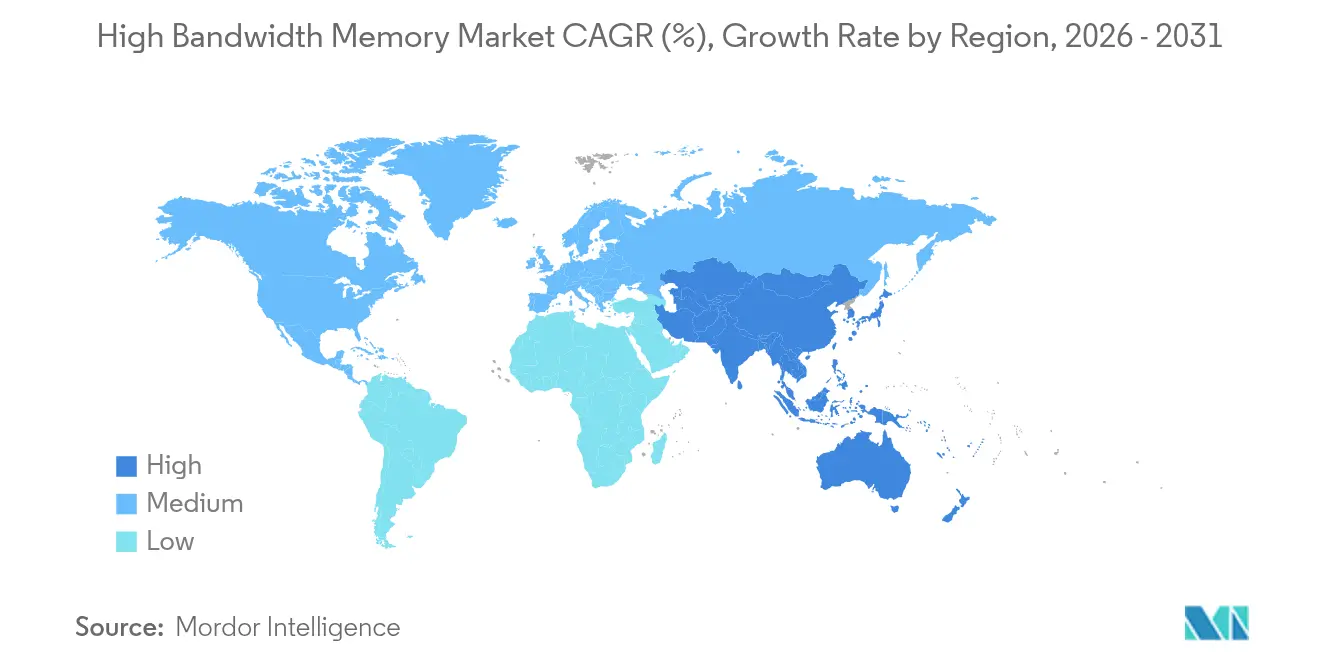

- By geography, Asia-Pacific held 41.00% revenue share in 2025 and is predicted to grow at a 28.80% CAGR through 2031.
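As a rough cross-check, the 2025 shares listed above can be converted into approximate revenue figures. This sketch assumes every share is a revenue share measured against the same USD 3.17 billion 2025 total; the report does not state that explicitly, and the dictionary labels are illustrative.

```python
# 2025 market size quoted in the report (USD billion).
MARKET_SIZE_2025_USD_BN = 3.17

# Leading segment in each dimension, with its 2025 share in percent.
shares_2025 = {
    "Servers (application)": 67.80,
    "HBM3 (technology)": 45.70,
    "16 GB (capacity per stack)": 38.20,
    "GPUs (processor interface)": 63.60,
    "Asia-Pacific (geography)": 41.00,
}

# Assumption: each share applies to the same 2025 revenue base.
revenue_usd_bn = {
    name: MARKET_SIZE_2025_USD_BN * pct / 100
    for name, pct in shares_2025.items()
}

for name, value in revenue_usd_bn.items():
    print(f"{name}: ~USD {value:.2f} billion")
```

Each dimension slices the same total, so figures from different dimensions should not be added together.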

Note: Market size and forecast figures in this report are generated using Mordor Intelligence’s proprietary estimation framework, updated with the latest available data and insights as of 2026.

Global High Bandwidth Memory Market Trends and Insights

Drivers Impact Analysis*

| Driver | (~) % Impact on CAGR Forecast | Geographic Relevance | Impact Timeline |
|---|---|---|---|
| AI-server proliferation and GPU attach rates | +8.5% | Global, concentrated in North America and Asia-Pacific | Medium term (2-4 years) |
| Data-center shift to DDR5 and 2.5-D packaging | +6.2% | Global, led by hyperscaler regions | Medium term (2-4 years) |
| Edge-AI inference in automotive ADAS | +4.8% | Automotive hubs in Europe, North America, and China | Long term (≥ 4 years) |
| Hyperscaler preference for silicon interposer stacks | +3.7% | North America, Asia-Pacific data center regions | Short term (≤ 2 years) |
| Localized memory production subsidies | +2.1% | US, South Korea, Japan | Long term (≥ 4 years) |
| Photonics-ready HBM road-maps | +1.1% | Global, early adoption in research centers | Long term (≥ 4 years) |

Source: Mordor Intelligence

AI-Server Proliferation and GPU Attach Rates

Rapid growth in large-scale language models drove a seven-fold rise in HBM per GPU requirements compared with traditional HPC devices during 2024. NVIDIA’s H100 carried 80 GB of HBM3, delivering 3.35 TB/s, while the H200 was sampled in early 2025 with 141 GB of HBM3E at 4.8 TB/s. [1] (NVIDIA, “GPU Memory Essentials for AI Performance,” nvidia.com) Order backlogs locked in the majority of supplier capacity through 2026, forcing data-center operators to pre-purchase inventory and co-invest in packaging lines.

Data-Center Shift to DDR5 and 2.5-D Packaging

Hyperscalers moved workloads from DDR4 to DDR5 to obtain 50% better performance per watt, simultaneously adopting 2.5-D integration that links AI accelerators to stacked memory on silicon interposers. Dependence on a single packaging platform heightened supply-chain risk when substrate shortages delayed GPU launches throughout 2024.

Edge-AI Inference in Automotive ADAS

Autonomous vehicles that reach Level 4 capability process sensor streams above 1 TB/s, pushing the automotive tier toward HBM4 samples qualified under ISO 26262. Memory vendors introduced safety-oriented designs that include built-in ECC and enhanced thermal monitoring to meet functional-safety mandates.

Hyperscaler Preference for Silicon Interposer Stacks

Custom AI chips from AWS, Google, and Microsoft integrated multiple HBM stacks through TSMC’s CoWoS, achieving interconnect densities above 10,000 connections/mm². Vendors reacted to capacity shortages by funding dedicated interposer lines and co-developing chiplet architectures that shrink interposer footprints.

Restraints Impact Analysis*

| Restraint | (~) % Impact on CAGR Forecast | Geographic Relevance | Impact Timeline |
|---|---|---|---|
| TSV yield losses above 12-layer stacks | -4.2% | Global, concentrated in advanced fabs | Medium term (2-4 years) |
| Limited CoWoS/SoIC advanced-packaging capacity | -3.8% | Asia-Pacific, affecting global supply | Short term (≤ 2 years) |
| Thermal throttling in >1 TB/s bandwidth devices | -2.1% | Global, particularly in data centers | Medium term (2-4 years) |
| Geo-political export controls on AI accelerators | -1.9% | China, with spillover effects globally | Long term (≥ 4 years) |

Source: Mordor Intelligence

TSV Yield Losses Above 12-Layer Stacks

Yield fell below 70% on 16-high HBM stacks because thermal cycling induced copper-migration failures within TSVs. Manufacturers pursued thermal through-silicon via designs and novel dielectric materials to stabilize reliability, but commercialization remains two years away.

Limited CoWoS/SoIC Advanced-Packaging Capacity

CoWoS lines averaged 95% utilization in 2024; substrate makers struggled to supply T-Glass at sufficient volumes, forcing allocation to top customers and delaying emerging AI accelerator programs. Alternative SoIC flows from Samsung and EMIB from Intel entered early ramp, yet could not offset near-term shortages.

*Our updated forecasts treat driver/restraint impacts as directional, not additive. The revised impact forecasts reflect baseline growth, mix effects, and variable interactions.
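The footnote's point that the impacts are directional rather than additive can be illustrated by summing the table values naively. The sketch below uses only the percentages from the two tables and the headline 25.58% CAGR; the variable names are illustrative, and the comparison is a reading aid rather than part of the report's method.

```python
# Impact on CAGR forecast, in percentage points, from the drivers table.
driver_impacts = {
    "AI-server proliferation and GPU attach rates": 8.5,
    "Data-center shift to DDR5 and 2.5-D packaging": 6.2,
    "Edge-AI inference in automotive ADAS": 4.8,
    "Hyperscaler preference for silicon interposer stacks": 3.7,
    "Localized memory production subsidies": 2.1,
    "Photonics-ready HBM road-maps": 1.1,
}

# Impact on CAGR forecast, in percentage points, from the restraints table.
restraint_impacts = {
    "TSV yield losses above 12-layer stacks": -4.2,
    "Limited CoWoS/SoIC advanced-packaging capacity": -3.8,
    "Thermal throttling in >1 TB/s bandwidth devices": -2.1,
    "Geo-political export controls on AI accelerators": -1.9,
}

HEADLINE_CAGR_PCT = 25.58  # forecast CAGR for 2026-2031 quoted in the report

total_drivers = sum(driver_impacts.values())
total_restraints = sum(restraint_impacts.values())
naive_net = total_drivers + total_restraints

print(f"Sum of drivers:    {total_drivers:+.1f} pp")
print(f"Sum of restraints: {total_restraints:+.1f} pp")
print(f"Naive net:         {naive_net:+.1f} pp vs headline {HEADLINE_CAGR_PCT:.2f}%")
```

The naive net does not reproduce the headline CAGR, which is consistent with the report treating each figure as an indication of direction and relative weight instead of a term in a sum.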

Segment Analysis

By Application: Servers Drive Infrastructure Transformation

The server category led the high bandwidth memory market with a 67.80% revenue share in 2025, reflecting hyperscale operators’ pivot to AI servers that each integrate eight to twelve HBM stacks. Demand accelerated after cloud providers launched foundation-model services that rely on per-GPU bandwidth above 3 TB/s. Energy efficiency targets in 2025 favored stacked DRAM because it delivered superior performance-per-watt over discrete solutions, enabling data-center operators to stay within power envelopes. An enterprise refresh cycle began as companies replaced DDR4-based nodes with HBM-enabled accelerators, extending purchasing commitments into 2027.

The automotive and transportation segment, while smaller today, is projected to grow fastest, at a 34.18% CAGR through 2031. Chipmakers collaborated with Tier 1 suppliers to embed functional-safety features that meet ASIL D requirements. Level 3 production programs in Europe and North America entered limited rollout in late 2024, each vehicle using memory bandwidth previously reserved for data-center inference clusters. As over-the-air update strategies matured, vehicle manufacturers began treating cars as edge servers, further sustaining HBM attach rates.

By Technology: HBM3 Leadership Faces HBM3E Disruption

HBM3 accounted for 45.70% revenue in 2025 after widespread adoption in AI training GPUs. Sampling of HBM3E started in Q1 2024, and first-wave production ran at pin speeds above 9.2 Gb/s. Performance gains reached 1.2 TB/s per stack, reducing the number of stacks needed for the target bandwidth and lowering package thermal density.

HBM3E’s 40.90% forecast CAGR is underpinned by Micron’s 36 GB, 12-high product that entered volume production in mid-2025, targeting accelerators with model sizes up to 520 billion parameters. Looking forward, the HBM4 standard published in April 2025 doubles channels per stack and raises aggregate throughput to 2 TB/s, setting the stage for multi-petaflop AI processors.
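The per-stack bandwidth figures in this section follow from pin speed multiplied by interface width. The sketch below is illustrative and rests on assumptions not stated in the report: a 1024-bit interface for HBM3E, and a 2048-bit interface at 8 Gb/s per pin for HBM4. The function name is hypothetical.

```python
def stack_bandwidth_tb_s(pin_speed_gbps: float, interface_width_bits: int) -> float:
    """Peak per-stack bandwidth in TB/s (decimal units).

    pin_speed_gbps: data rate per pin in gigabits per second.
    interface_width_bits: number of data pins on the stack interface.
    """
    gigabytes_per_second = pin_speed_gbps * interface_width_bits / 8
    return gigabytes_per_second / 1000


# Pin speed from the text; the 1024-bit width is an assumption.
hbm3e = stack_bandwidth_tb_s(pin_speed_gbps=9.2, interface_width_bits=1024)

# Both values are assumptions: a doubled interface at 8 Gb/s per pin.
hbm4 = stack_bandwidth_tb_s(pin_speed_gbps=8.0, interface_width_bits=2048)

print(f"HBM3E per stack: ~{hbm3e:.2f} TB/s")
print(f"HBM4 per stack:  ~{hbm4:.2f} TB/s")
```

Under these assumptions the results sit close to the 1.2 TB/s and 2 TB/s figures quoted above, and they show why a wider interface raises throughput even at a lower pin speed.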

By Memory Capacity: 16 GB Mainstream Yields to 32 GB Expansion

The 16 GB tier represented 38.20% of the high bandwidth memory market during 2025, balancing yield and capacity for mainstream LLM training nodes. Suppliers relied on mature 8-high stack configurations that shipped at high yields, supporting aggressive cost targets.

Demand for larger models spurred a swift pivot toward 32 GB and 36 GB offerings, driving a 36.40% CAGR expectation for 32 GB-plus devices to 2031. Micron’s 36 GB, 12-high HBM3E widened capacity without exceeding 12-layer TSV risk thresholds. Upcoming 24-high HBM4E roadmaps target 64 GB per stack, although vendors continued to refine embedded cooling to offset thermal density.
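The link between stack height and capacity can be made concrete by deriving the DRAM die density that each configuration implies. This is a minimal sketch that assumes capacity is spread evenly across the DRAM layers and that the base logic die is not counted; the function name is hypothetical.

```python
def implied_die_density_gbit(stack_capacity_gb: float, dram_layers: int) -> float:
    """Per-die density in gigabits implied by a stack's capacity and height."""
    gigabytes_per_die = stack_capacity_gb / dram_layers
    return gigabytes_per_die * 8  # 8 bits per byte


# Configurations named in this section.
mainstream = implied_die_density_gbit(stack_capacity_gb=16, dram_layers=8)
hbm3e_12_high = implied_die_density_gbit(stack_capacity_gb=36, dram_layers=12)

print(f"16 GB, 8-high:  {mainstream:.0f} Gb per die")
print(f"36 GB, 12-high: {hbm3e_12_high:.0f} Gb per die")
```

Seen this way, the move from 16 GB to 36 GB stacks combines two levers: more layers per stack and denser dies in each layer.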

By Processor Interface: GPU Dominance Challenged by AI Accelerators

GPUs consumed 63.60% of 2025 shipments as NVIDIA’s H100 and H200 lines dominated AI training clusters. Peak utilization rates forced cloud operators to reserve future wafer outputs well into 2026.

Custom AI accelerators showed a 32.00% projected CAGR to 2031 as hyperscalers shifted toward internally designed chips optimized for proprietary workloads. These ASICs often integrate high bandwidth memory directly on-package, eliminating off-chip latency. FPGA-based cards retained a niche position in network function virtualization and low-latency trading, leveraging HBM to sustain throughput without sacrificing reconfigurability.

Geography Analysis

Asia-Pacific accounted for 41.00% of 2025 revenue, anchored by South Korea, where SK Hynix and Samsung controlled more than 80% of production lines. Government incentives announced in 2024 supported an expanded fabrication cluster scheduled to open in 2027. Taiwan’s TSMC maintained a packaging monopoly for leading-edge CoWoS, tying memory availability to local substrate supply and creating a regional concentration risk.

North America’s share grew as Micron secured USD 6.1 billion in CHIPS Act funding to build advanced DRAM fabs in New York and Idaho, with pilot HBM runs expected in early 2026. Hyperscaler capital expenditures continued to drive local demand, although most wafers were still processed in Asia before final module assembly in the United States.

Europe entered the market through automotive demand; German OEMs qualified HBM for Level 3 driver-assist systems shipping in late 2024. The EU’s semiconductor strategy remained R&D-centric, favoring photonic interconnect and neuromorphic research that could unlock future high bandwidth memory market expansion. Middle East and Africa stayed in an early adoption phase, yet sovereign AI datacenter projects initiated in 2025 suggested a coming uptick in regional demand.

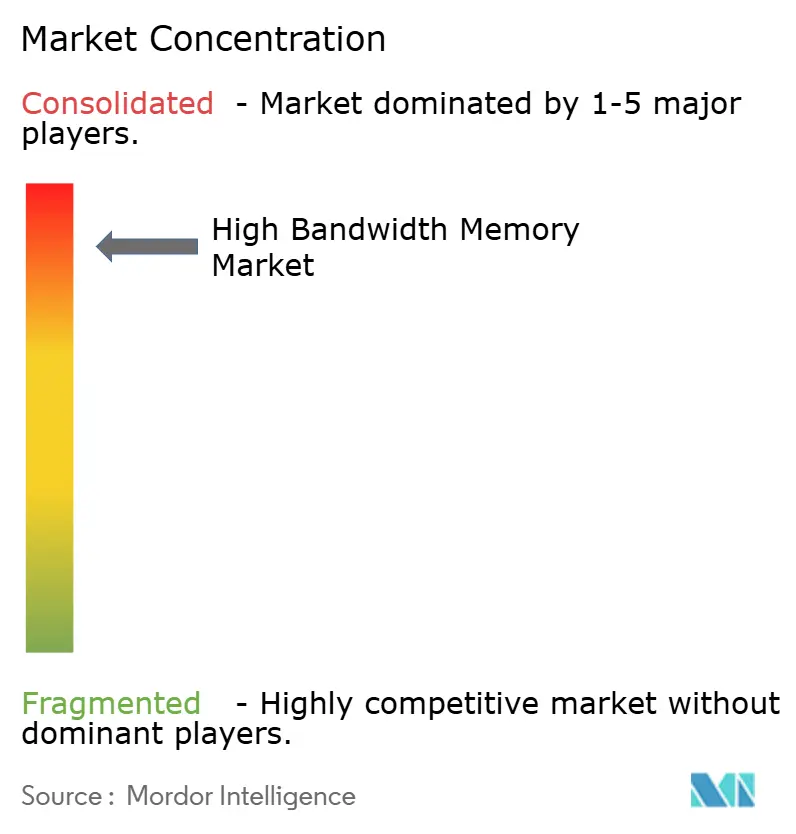

Competitive Landscape

The high bandwidth memory market displayed oligopolistic characteristics because SK Hynix, Samsung, and Micron collectively supplied more than 95% of global output. SK Hynix held leadership thanks to early TSV investment and sole-source contracts with NVIDIA for HBM3E. Samsung narrowed the gap after resolving 2024 yield issues and launching a dual-site HBM4 line at Pyeongtaek in mid-2025. Micron accelerated share gains by pairing its 36 GB HBM3E with AMD’s MI350 GPU, providing an attractive alternative for open AI hardware ecosystems.

Competition shifted from core cell technology toward advanced packaging alliances. SK Hynix and TSMC announced a co-production model that couples N3 logic with HBM4 stacks under a single procurement cycle, locking in customers through 2028. [4] (SK Hynix, “SK Hynix Partners With TSMC to Strengthen HBM Leadership,” skhynix.com) Suppliers also targeted differentiated niches such as automotive-qualified HBM variants that incorporate extended temperature ranges and real-time diagnostics. Chinese entrants continued to develop domestic HBM2E and HBM3 capabilities; however, export controls limited equipment access, keeping their offerings one to two generations behind.

The push toward application-specific memory catalyzed a service-oriented engagement model where vendors tune speed bins, channel counts, and ECC schemes to individual workloads. This customization strategy built switching costs that favored incumbent suppliers and reinforced market concentration through 2030.

High Bandwidth Memory Industry Leaders

- Micron Technology, Inc.

- Samsung Electronics Co. Ltd.

- SK Hynix Inc.

- Intel Corporation

- Fujitsu Limited

*Disclaimer: Major players are sorted in no particular order.

Recent Industry Developments

- January 2025: Micron integrated its HBM3E 36 GB memory into AMD’s Instinct MI350 GPUs, delivering up to 8 TB/s bandwidth.

- April 2025: JEDEC released the JESD270-4 HBM4 standard, enabling 2 TB/s throughput and 64 GB configurations.

- November 2025: SK Hynix and TSMC expanded joint HBM4 development to speed volume availability for 3 nm AI accelerators.

- July 2025: SK Hynix confirmed construction of a USD 6.8 billion memory fab in Yongin targeting HBM production.

Global High Bandwidth Memory Market Report Scope

High bandwidth memory (HBM) is a high-speed computer memory interface for 3D-stacked synchronous dynamic random-access memory (SDRAM). It is used with high-performance network hardware, data center AI ASICs, FPGAs, and supercomputers.

The high bandwidth memory (HBM) market is segmented by application (servers, networking, consumer, and automotive and other applications) and geography (North America [United States and Canada], Europe [Germany, France, United Kingdom, and Rest of Europe], Asia-Pacific [India, China, Japan, and Rest of Asia-Pacific], and Rest of the World).

The market sizes and forecasts are provided in terms of value (USD) for all the above segments.

| Segmentation | Segment | Sub-region / Country | Country |
|---|---|---|---|
| By Application | Servers | | |
| | Networking | | |
| | High-Performance Computing | | |
| | Consumer Electronics | | |
| | Automotive and Transportation | | |
| By Technology | HBM2 | | |
| | HBM2E | | |
| | HBM3 | | |
| | HBM3E | | |
| | HBM4 | | |
| By Memory Capacity per Stack | 4 GB | | |
| | 8 GB | | |
| | 16 GB | | |
| | 24 GB | | |
| | 32 GB and Above | | |
| By Processor Interface | GPU | | |
| | CPU | | |
| | AI Accelerator / ASIC | | |
| | FPGA | | |
| | Others | | |
| By Geography | North America | United States | |
| | | Canada | |
| | | Mexico | |
| | South America | Brazil | |
| | | Rest of South America | |
| | Europe | Germany | |
| | | France | |
| | | United Kingdom | |
| | | Rest of Europe | |
| | Asia-Pacific | China | |
| | | Japan | |
| | | India | |
| | | South Korea | |
| | | Rest of Asia-Pacific | |
| | Middle East and Africa | Middle East | Saudi Arabia |
| | | | United Arab Emirates |
| | | | Turkey |
| | | | Rest of Middle East |
| | | Africa | South Africa |
| | | | Rest of Africa |

Key Questions Answered in the Report

What is the current size of the high bandwidth memory market?

The high bandwidth memory market was valued at USD 3.98 billion in 2026 and is forecast to reach USD 12.44 billion by 2031.

Which application segment leads in spending?

Servers contributed 67.80% of 2025 revenue as hyperscalers adopted AI-centric architectures.

Why is HBM3E gaining share?

HBM3E delivers up to 1.2 TB/s per stack and reduces power draw, making it the preferred option for next-generation GPUs and AI accelerators.

How are automakers using HBM?

Automotive OEMs are transitioning to ISO 26262-qualified HBM4 to meet the memory bandwidth demands of Level 3 and Level 4 autonomous driving.

Which region manufactures the most high-bandwidth memory?

Asia-Pacific leads with a 41.00% revenue share in 2025 and houses the majority of fabrication and advanced-packaging capacity.
