VRAM Market Size and Share

VRAM Market Analysis by Mordor Intelligence

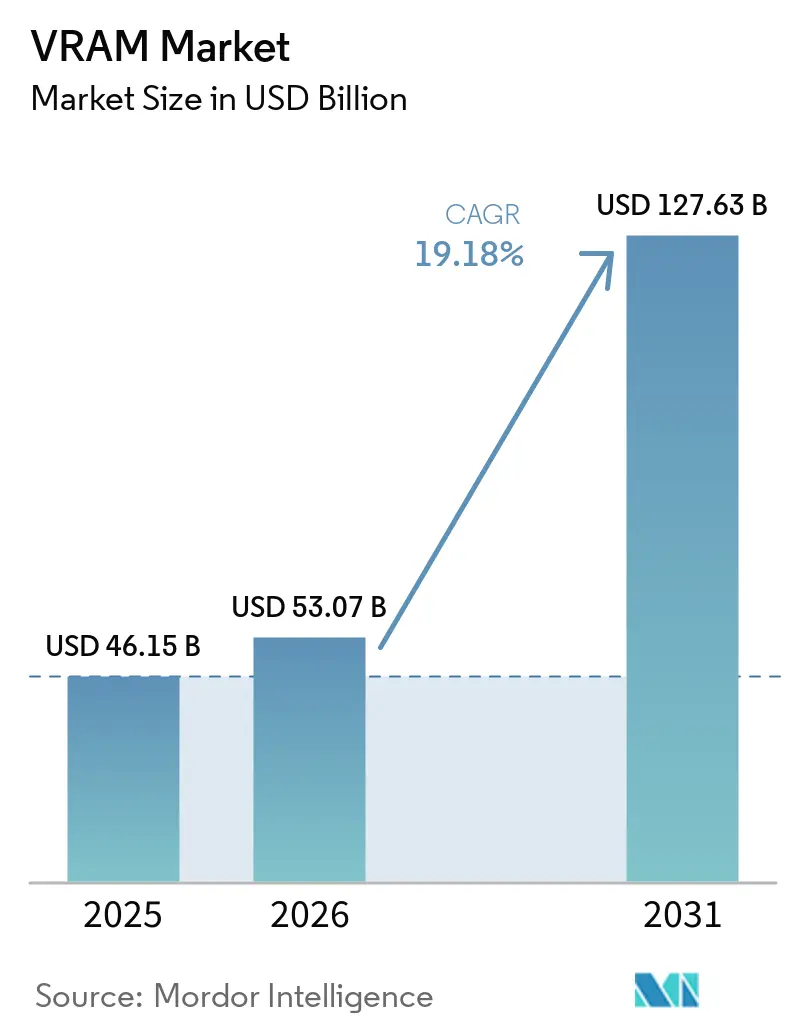

The VRAM market size was valued at USD 46.15 billion in 2025 and is estimated to grow from USD 53.07 billion in 2026 to USD 127.63 billion by 2031, at a CAGR of 19.18% during the forecast period (2026-2031). Growing AI training clusters are pushing high-bandwidth memory (HBM) costs to almost half of a flagship GPU's bill of materials, tilting the balance of power toward memory suppliers. With only three companies able to ship HBM in volume, long lead times lock buyers into multiyear commitments, sustaining premium pricing even as broader DRAM cycles soften. Rising 4K/8K gaming adoption keeps GDDR demand elevated, but the fastest incremental revenue is shifting to data-center accelerators that carry 192-432 GB of HBM each. Asia-Pacific remains the manufacturing hub for both wafers and advanced packaging, while North American hyperscalers lead consumption through record-size AI build-outs, driving the VRAM market toward structurally higher margins.
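The headline figures are linked by the standard compound-growth formula, CAGR = (end value / start value)^(1 / years) - 1. The minimal Python sketch below uses only the 2026 and 2031 USD figures quoted above to check that they are consistent with the stated 19.18% rate; it is an arithmetic check, not a reproduction of Mordor Intelligence's estimation framework.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.1918 means 19.18%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report, in USD billion; 2026 is the base year, 2031 the end year.
base_2026 = 53.07
end_2031 = 127.63

rate = cagr(base_2026, end_2031, years=5)
print(f"Implied CAGR 2026-2031: {rate:.2%}")
print(f"2031 value when compounding at 19.18%: {project(base_2026, 0.1918, 5):.2f}")
```

Running the projection the other way (compounding the 2026 base at 19.18% for five years) lands within a few hundredths of a billion of the 2031 figure, which is the rounding one would expect from a two-decimal CAGR.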

Key Report Takeaways

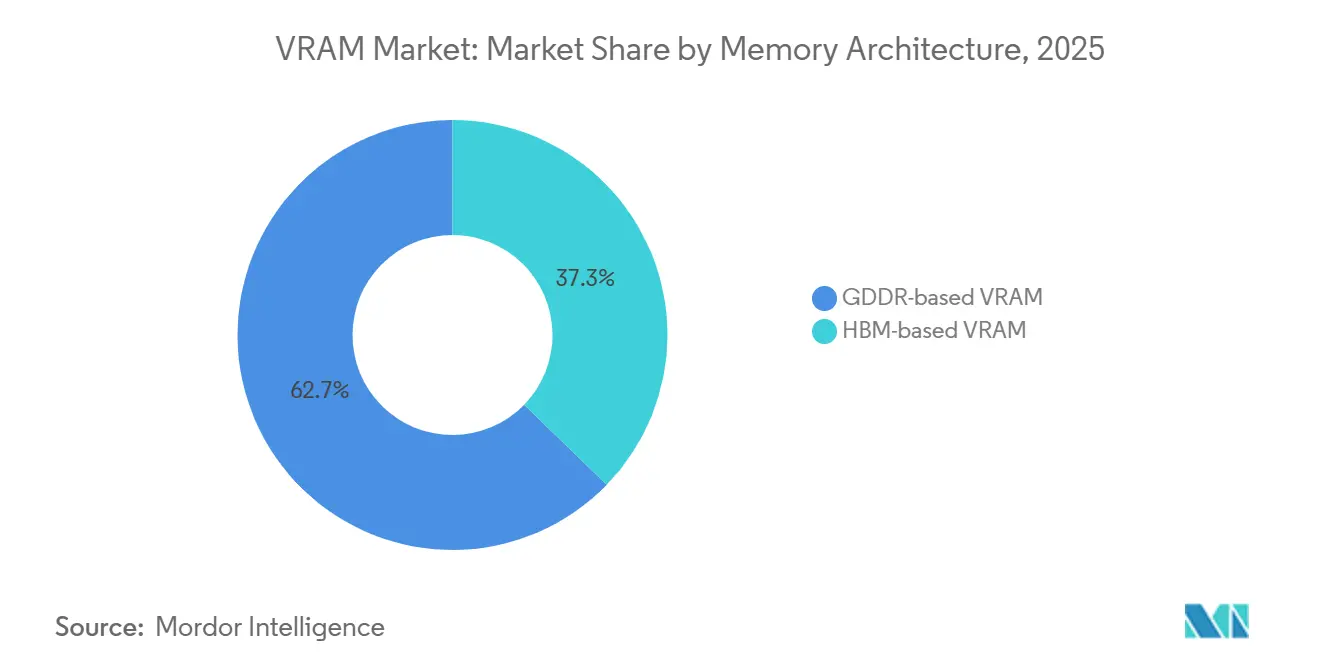

- By memory architecture, GDDR-based devices led with a 62.73% share of the VRAM market in 2025, while HBM-based devices are projected to expand at a 19.38% CAGR through 2031.

- By VRAM capacity, the 8-16 GB tier captured 39.31% of the video random access memory market in 2025, and the above-64 GB tier is forecast to grow at a 19.58% CAGR through 2031.

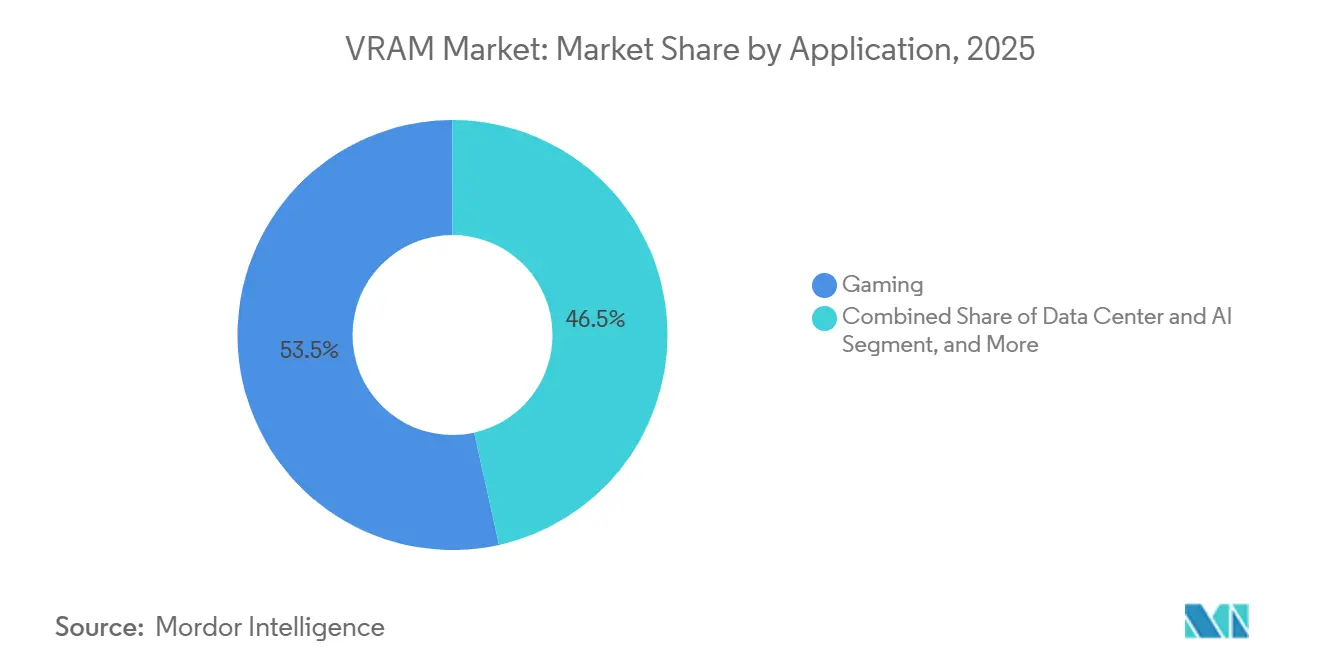

- By application, gaming commanded a 53.47% share of the VRAM market in 2025, whereas data center and AI workloads are projected to post the fastest growth at a 20.18% CAGR over 2026-2031.

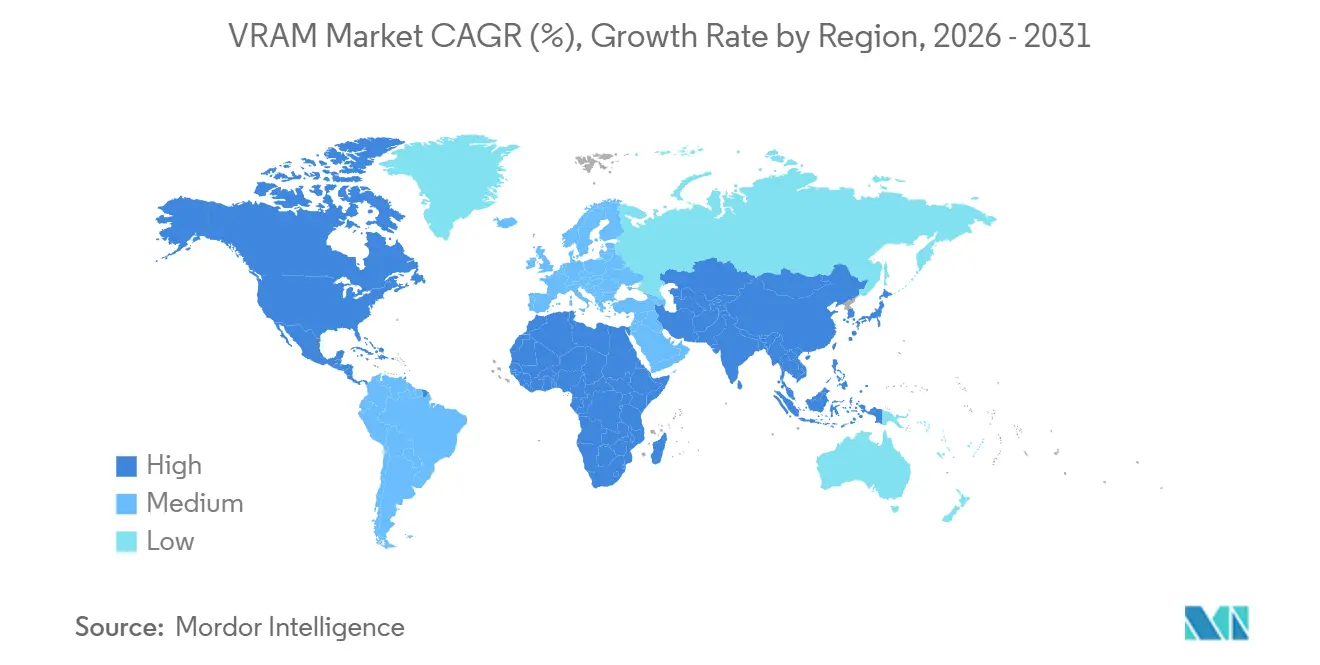

- By geography, Asia-Pacific accounted for 67.17% of the VRAM market in 2025 and is anticipated to grow at a 20.14% CAGR during the outlook period.

Note: Market size and forecast figures in this report are generated using Mordor Intelligence’s proprietary estimation framework, updated with the latest available data and insights as of January 2026.

Global VRAM Market Trends and Insights

Driver Impact Analysis

| Driver | (~) % Impact on CAGR Forecast | Geographic Relevance | Impact Timeline |
|---|---|---|---|
| Explosive AI Training Workloads in Hyperscale Data Centers | +6.2% | Global, especially North America and Asia-Pacific | Medium term (2-4 years) |
| Mainstream Adoption of 4K/8K Gaming and Ray-Tracing GPUs | +3.8% | Global, led by North America, Europe, affluent Asia-Pacific | Short term (≤ 2 years) |
| Transition to GDDR7 and HBM3E Memory Standards | +4.1% | Global, fabrication in Asia-Pacific, design in North America | Medium term (2-4 years) |
| Edge AI Growth in Automotive ADAS and Industrial Robots | +2.3% | Europe (automotive) and Asia-Pacific (industrial) | Long term (≥ 4 years) |
| Government CHIPS Incentives Accelerating Domestic Memory Fabs | +1.9% | United States, European Union, Japan, South Korea | Long term (≥ 4 years) |
| On-Package Compute-in-Memory Architectures Reducing Latency | +1.5% | Global early adopters in hyperscale AI and mobile edge | Long term (≥ 4 years) |

Source: Mordor Intelligence

Explosive AI Training Workloads in Hyperscale Data Centers

Rising complexity in large language models is forcing every flagship accelerator to integrate ever-larger HBM stacks. A single high-end GPU now carries memory that costs more than its logic die, and multi-year capacity reservations by cloud providers have removed spot-market flexibility. The resulting demand shock gives memory makers durable pricing power and decouples the VRAM market from historic DRAM boom-bust swings. Capital spending on AI-class data centers, therefore, reinforces long-run revenue visibility for HBM producers.

Mainstream Adoption of 4K/8K Gaming and Ray-Tracing GPUs

Consumer GPUs must now buffer ultra-high-resolution textures and ray-tracing acceleration data structures, lifting baseline card capacities to 16 GB and above. Mid-cycle console refreshes sustain a floor under GDDR shipments, while workstation variants stretch the same technology to 96 GB for real-time engineering workloads. Tight HBM supply is pushing suppliers to prioritize higher-margin stacks, indirectly tightening GDDR availability and supporting firm pricing through late 2027.

Transition to GDDR7 and HBM3E Memory Standards

GDDR7 doubles per-pin throughput relative to early GDDR6, giving board vendors a bridge solution for 8K gaming and entry-level inference without incurring HBM packaging costs. On the stacked-memory side, HBM3E is shipping in volume, and initial HBM4 parts are already sampling at more than 11 Gbps per pin, which over HBM4's 2048-bit interface approaches or exceeds 3 TB/s per stack. Controller IP from third-party vendors is becoming available quickly, compressing product cycles and giving buyers second-source options, which accelerates mainstream adoption of the new standards.
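Per-pin data rates and per-stack bandwidth are related by a simple formula: peak bandwidth = interface width × per-pin rate ÷ 8. The Python sketch below applies it using the JEDEC interface widths (1024 bits per HBM3E stack, 2048 bits per HBM4 stack, 32 bits per GDDR7 device); the pin rates are illustrative assumptions, and the results are theoretical peaks that ignore protocol overhead. At exactly 11 Gbps a 2048-bit HBM4 stack reaches about 2.8 TB/s, so a figure above 3 TB/s implies pin rates of roughly 11.7 Gbps or more.

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s (decimal): width x per-pin rate / 8 bits per byte."""
    return bus_width_bits * pin_rate_gbps / 8


# Interface widths per JEDEC: 1024 bits per HBM3E stack, 2048 bits per HBM4 stack,
# 32 bits per GDDR7 device. Pin rates are illustrative assumptions.
configs = {
    "HBM3E stack @ 9.6 Gbps": (1024, 9.6),
    "HBM4 stack @ 11.0 Gbps": (2048, 11.0),
    "HBM4 stack @ 12.0 Gbps": (2048, 12.0),
    "GDDR7 device @ 32 Gbps": (32, 32.0),
    "GDDR7 board, 512-bit bus @ 32 Gbps": (512, 32.0),
}

for name, (width, rate) in configs.items():
    tb_per_s = peak_bandwidth_gbs(width, rate) / 1000  # 1 TB/s = 1000 GB/s
    print(f"{name}: {tb_per_s:.2f} TB/s")
```

Under these assumptions a full 512-bit GDDR7 board at 32 Gbps lands near the bandwidth of a single HBM4 stack, which illustrates why GDDR7 works as a bridge for entry-level inference while flagship accelerators combine several HBM stacks.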

Edge AI Growth in Automotive ADAS and Industrial Robots

Zonal vehicle architectures and factory robots running vision transformers require mid-capacity VRAM footprints within tight power budgets. NVIDIA's TensorRT Edge-LLM framework, optimized for CLIP-based vision models, requires 6-8 GB of VRAM for real-time inference on vehicle gateway processors, driving adoption of 8-16 GB GDDR6 memory configurations in automotive compute platforms [1: “Optimize Vision-Language Models for Edge AI With NVIDIA TensorRT Edge-LLM,” NVIDIA Developer Blog, developer.nvidia.com]. Fleet-wide telematics hubs that process multiple camera feeds simultaneously can require 16 GB. Similar dynamics in collaborative robots are producing discrete GPU design wins, expanding the addressable base for GDDR6 and upcoming LPDDR6 devices.
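The 6-8 GB figure can be sanity-checked with a common back-of-envelope estimate: weight memory (parameters × bytes per parameter) plus the transformer key/value cache (2 × layers × KV heads × head dimension × sequence length × batch × bytes per element). The model dimensions below describe a hypothetical ~3B-parameter FP16 model chosen for illustration, not the models NVIDIA benchmarks, and the estimate leaves out activations and runtime overhead.

```python
def weights_bytes(n_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights."""
    return n_params * bytes_per_param


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: float) -> float:
    """Key/value cache size: one K and one V tensor per layer, per cached token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem


# Hypothetical ~3B-parameter model stored in FP16 (2 bytes per value).
# Layer count, KV-head count, head size, and context length are illustrative assumptions.
w = weights_bytes(3e9, 2)
kv = kv_cache_bytes(n_layers=28, n_kv_heads=8, head_dim=128,
                    seq_len=4096, batch=1, bytes_per_elem=2)
total_gb = (w + kv) / 1e9  # decimal gigabytes; activations and runtime overhead excluded
print(f"weights {w / 1e9:.2f} GB + KV cache {kv / 1e9:.2f} GB = {total_gb:.2f} GB")
```

Under these assumptions the footprint comes to roughly 6.5 GB, inside the 6-8 GB band; quantizing the weights to 4 bits (0.5 bytes per parameter) would cut the weight term to about 1.5 GB.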

Restraint Impact Analysis

| Restraint | (~) % Impact on CAGR Forecast | Geographic Relevance | Impact Timeline |
|---|---|---|---|
| Persistent HBM Supply Bottlenecks and Long Fab Lead-Times | -3.7% | Global, most acute in North America and Europe | Medium term (2-4 years) |
| High Cost Differential Between HBM and Conventional GDDR | -2.1% | Global, affects cost-sensitive enterprise and edge buyers | Short term (≤ 2 years) |
| Geopolitical Export Controls on Advanced Memory Technologies | -1.6% | China primary, global secondary routing effects | Medium term (2-4 years) |
| Integrated Graphics Performance Cannibalizing Entry-Level GPUs | -0.9% | Global, consumer and small-business segments | Short term (≤ 2 years) |

Source: Mordor Intelligence

Persistent HBM Supply Bottlenecks and Long Fab Lead-Times

Advanced packaging capacity for stacked memory remains the market's most significant bottleneck. New packaging facilities take nearly two years to build, and customer qualification adds six months or more. This long lead time has kept supply short even as the industry invests at record levels to expand capacity. Sub-70% yields reported for the latest HBM3E stacks add further production risk. Meanwhile, reallocating wafer starts from GDDR to HBM to meet high-performance demand has constrained the availability of mid-range GPUs.
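One way to see why taller stacks magnify yield risk is a simplified model in which every die and bonding step must succeed independently, so stack yield = (per-layer yield)^(layer count), and the cost of scrapped stacks is spread over the good ones. The 97% per-layer yield below is a hypothetical input; real HBM yields also depend on known-good-die testing, TSV formation, bonding method, and repair schemes, all of which this sketch ignores.

```python
def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Simplified stack yield: every layer (die plus bond) must succeed independently."""
    return per_layer_yield ** layers


def cost_per_good_stack(cost_per_stack: float, stack_yield_fraction: float) -> float:
    """Cost of each good stack once the scrapped stacks are paid for by the good ones."""
    return cost_per_stack / stack_yield_fraction


PER_LAYER_YIELD = 0.97  # hypothetical input, not a reported figure

for layers in (8, 12, 16):
    y = stack_yield(PER_LAYER_YIELD, layers)
    rel_cost = cost_per_good_stack(1.0, y)  # relative to a stack that never fails
    print(f"{layers}-high: yield {y:.1%}, cost per good stack {rel_cost:.2f}x")
```

Under this assumption a 12-high stack already falls just below 70% yield, and each good stack carries roughly 1.44 times the raw stack cost.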

High Cost Differential Between HBM and Conventional GDDR

HBM still carries a three- to four-times price premium per gigabyte over GDDR, which confines it largely to workloads where extra bandwidth translates directly into revenue. Vendors therefore reserve HBM for top-tier SKUs whose performance justifies the expense and position high-speed GDDR7 for cost-sensitive tiers, balancing performance against affordability. This allocation, however, limits the volume scaling that could otherwise narrow the price gap, so HBM adoption remains constrained in edge devices and smaller enterprise clusters, where cost is decisive.

Segment Analysis

By Memory Architecture: HBM Accelerates Value Capture

HBM-based devices accounted for the smaller share of the market in 2025 (the 37.27% not held by GDDR), yet are forecast to post a leading 19.38% CAGR between 2026 and 2031. This trajectory reflects hyperscale AI platforms' prioritization of terabyte-per-second-class memory bandwidth, which is critical for complex workloads and large-scale data processing, and their willingness to absorb a higher cost per gigabyte in exchange for HBM's performance. Samsung began commercial HBM4 shipments in 2026, delivering up to 3.3 TB/s per stack, which widens the performance gap over GDDR alternatives and further entrenches HBM in high-performance computing.

GDDR technology, while still holding a 62.73% market share, remains indispensable for desktop gaming, professional visualization, and fast-growing 8K content creation. GDDR7, with per-pin data rates of up to 32 Gbps, extends the technology's relevance in both cost-sensitive and performance-driven markets. This diversification keeps HBM from monopolizing the video random access memory market, preserving a balance between high-performance and cost-effective solutions.

By VRAM Capacity: Shift Toward Ultra-Large Stacks

The 8-16 GB tier maintained the largest shipment volume in 2025, primarily driven by consumer GPUs that cater to a wide range of applications, including gaming and general-purpose computing. However, configurations exceeding 64 GB are projected to grow the fastest, with a robust CAGR of 19.58% forecast through 2031. This surge is attributed to the increasing demands of AI training workloads, which require significantly higher memory capacities. As AI models grow in complexity, single-accelerator footprints are expected to surpass 192 GB, with industry roadmaps already indicating the introduction of 384 GB devices by 2028.

In the ≤ 8 GB tier, increasingly capable integrated graphics are eroding demand for entry-level discrete cards on basic computing and graphics tasks. The 16-32 GB tier continues to grow steadily, serving high-end gaming, high-resolution content creation, and professional visualization. Workstation boards push GDDR further, into the above-64 GB tier: NVIDIA's RTX PRO 6000 offers 96 GB of GDDR7 for CAD assemblies, 3D rendering, and real-time simulation, workloads that exceed consumer gaming memory footprints but remain cost-sensitive relative to HBM-equipped data-center products [2: NVIDIA, “RTX PRO 6000,” nvidia.com]. As a result, the mid-capacity tiers of the VRAM market remain stable, even as hyperscale investment shifts overall market dynamics toward larger memory configurations.
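The capacities discussed in this section follow from simple board arithmetic: a GDDR bus is divided into one slice per memory device, and clamshell mode places two devices on each slice. The sketch below assumes 32-bit GDDR7 devices at 2 GB (16 Gb) or 3 GB (24 Gb) densities, and treats the report's tier boundaries as inclusive upper bounds, since the report does not say how boundary values such as 16 GB are assigned. A 512-bit bus with 3 GB devices in clamshell mode is one configuration that reaches 96 GB; it is used here as an assumption, not as a confirmed description of any specific product.

```python
# Report capacity tiers, with upper bounds assumed inclusive (e.g. 16 GB -> "8-16 GB").
TIERS = [(8, "≤ 8 GB"), (16, "8-16 GB"), (32, "16-32 GB"), (64, "32-64 GB")]


def board_capacity_gb(bus_width_bits: int, device_width_bits: int,
                      device_capacity_gb: int, clamshell: bool = False) -> int:
    """Total VRAM on a GDDR board built from identical memory devices."""
    devices = bus_width_bits // device_width_bits  # one device per bus slice
    if clamshell:
        devices *= 2  # clamshell: two devices share each slice, each using half its pins
    return devices * device_capacity_gb


def capacity_tier(capacity_gb: float) -> str:
    """Map a capacity to the report's segmentation tiers."""
    for upper_bound, label in TIERS:
        if capacity_gb <= upper_bound:
            return label
    return "Above 64 GB"


examples = {
    "256-bit, 2 GB devices": board_capacity_gb(256, 32, 2),
    "384-bit, 2 GB devices": board_capacity_gb(384, 32, 2),
    "512-bit, 3 GB devices, clamshell": board_capacity_gb(512, 32, 3, clamshell=True),
}
for name, gb in examples.items():
    print(f"{name}: {gb} GB -> {capacity_tier(gb)}")
```

The three example boards fall into the 8-16 GB, 16-32 GB, and above-64 GB tiers respectively, which is why a 96 GB workstation card sits alongside data-center products in the capacity segmentation even though it uses GDDR.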

By Application: Data Center AI Now Sets the Pace

Gaming still accounted for 53.47% of revenue in 2025, anchored by a large console installed base that keeps 16 GB of GDDR6 the standard target in cross-platform development budgets, along with steady demand for high-performance discrete GPUs. Data-center AI workloads, however, are projected to outpace every other segment at a 20.18% CAGR through 2031, as the adoption of AI-driven services and multi-gigawatt cloud rollouts generate petabytes of incremental HBM demand annually.

Professional visualization is also growing at a strong double-digit rate, supported by demand for real-time engineering simulation and media rendering, both of which require GPUs with substantial VRAM. Edge AI is scaling more gradually as automakers and factory-automation vendors adopt zonal designs that centralize compute and VRAM into fewer, larger pools, extending demand for GPU-class memory beyond the data center.

Geography Analysis

Asia-Pacific is the manufacturing backbone of the VRAM supply chain, accounting for 67.17% of revenue in 2025. Memory makers and foundries in South Korea and Taiwan are allocating more than USD 100 billion in 2026 to expand HBM clean-room capacity and advanced packaging, which underpins the region's forecast 20.14% CAGR. Rapid AI infrastructure build-outs in India and Southeast Asia are also adding local consumption alongside export-led growth, reinforcing the region's leadership in the global VRAM market.

North America has minimal domestic DRAM wafer production but is the largest consumer block once hyperscale imports are counted. The CHIPS Act, which provides more than USD 15 billion in grants and loans, aims to localize wafer and packaging capacity in the region, although significant production volumes are not expected before 2028. SK hynix secured USD 458 million in grants and up to USD 500 million in loans to build an advanced packaging facility in Indiana, targeting HBM assembly and test capacity that would serve U.S. hyperscalers with shorter lead times [3: U.S. Department of Commerce, “Biden-Harris Administration Announces Preliminary Terms for SK hynix Awards,” commerce.gov]. Until that capacity arrives, North American cloud providers remain heavily dependent on trans-Pacific supply chains that are exposed to geopolitical disruption, which makes diversifying supply sources a priority.

Europe lags in fabrication but shows growing VRAM demand from automotive advanced driver-assistance systems (ADAS) and industrial robotics. The European Chips Act has funded local pilot production lines, but the absence of full-scale HBM production limits the region's contribution to global supply. South America and the Middle East and Africa contribute smaller volumes, driven mainly by gaming and entry-level professional workloads, and are expected to grow steadily but modestly.

Competitive Landscape

Concentration is most pronounced in stacked memory, where SK hynix, Samsung, and Micron together account for essentially the entire HBM supply base. SK hynix continues to hold the largest market share, owing largely to its long-standing strategic alignment with NVIDIA, even though Samsung surpassed it in absolute HBM output after aggressively ramping HBM4 production in late 2025. Micron, the most recent entrant, aims to reach a double-digit share by 2027, supported by joint research and development with Applied Materials and early success supplying NVIDIA's H200 product line.

Competition in this market turns more on packaging yield and the depth of co-design partnerships than on wafer scale alone. Samsung and AMD, for instance, formalized their collaboration through a memorandum of understanding in 2026 to strengthen joint qualification [4: Samsung Newsroom, “Samsung and AMD Expand Strategic Collaboration on Next-Generation AI Memory Solutions,” news.samsung.com], mirroring NVIDIA's historically close collaboration with SK hynix. Fabless intellectual property (IP) providers such as Rambus and Cadence also play a critical role: their memory controller designs let custom accelerator teams reduce the risk of vendor lock-in and gain flexibility in sourcing.

Alternative packaging approaches could significantly alter market cost structures. Intel's EMIB-T (Embedded Multi-die Interconnect Bridge with through-silicon vias) offers a more cost-effective path for integrating HBM4, while wider-bus GDDR7 boards provide an interim option for inference accelerators whose price points cannot absorb HBM. Meanwhile, expanded export controls have forced Chinese manufacturers onto legacy DRAM nodes, reinforcing the position of incumbent suppliers. These dynamics are expected to keep the VRAM market highly consolidated through the rest of the decade.

VRAM Industry Leaders

Samsung Electronics Co. Ltd.

Micron Technology Inc.

NVIDIA Corporation

Advanced Micro Devices Inc.

SK Hynix Inc.

*Disclaimer: Major players are listed in no particular order.

Recent Industry Developments

- April 2026: Broadcom and Meta extended their multiyear partnership through 2029 to co-develop 2-nanometer AI chips, with Meta committing to more than 1 GW of custom silicon deployment.

- March 2026: Applied Materials and Micron launched a USD 5 billion EPIC Center collaboration to accelerate next-generation DRAM, HBM, and NAND innovations.

- March 2026: Applied Materials and SK hynix entered a parallel R&D agreement focused on materials and advanced packaging for AI memory.

- March 2026: Samsung and AMD signed an MoU to co-develop future HBM generations for high-performance computing accelerators.

Global VRAM Market Report Scope

The VRAM (Video Random Access Memory) Market refers to the global industry that designs, produces, and commercializes high-speed memory solutions for graphics processing units (GPUs) and other parallel computing devices. VRAM is a critical component that stores graphical data, textures, frame buffers, and AI model parameters, enabling fast data access and high-throughput performance for graphics rendering and compute-intensive workloads.

The VRAM market report is segmented by memory architecture (GDDR-based VRAM and HBM-based VRAM), VRAM capacity (≤ 8 GB, 8-16 GB, 16-32 GB, 32-64 GB, and Above 64 GB), application (Gaming, Data Center and AI, Professional Visualization, and Edge AI and Embedded), and geography (North America, Europe, Asia-Pacific, South America, and Middle East and Africa). Market forecasts are provided in terms of value (USD).


| Segment | Sub-segment | Country or Sub-region |
|---|---|---|
| By Memory Architecture | GDDR-based VRAM | |
| | HBM-based VRAM | |
| By VRAM Capacity | ≤ 8 GB | |
| | 8-16 GB | |
| | 16-32 GB | |
| | 32-64 GB | |
| | Above 64 GB | |
| By Application | Gaming | |
| | Data Center and AI | |
| | Professional Visualization | |
| | Edge AI and Embedded | |
| By Geography | North America | United States |
| | | Canada |
| | | Mexico |
| | Europe | United Kingdom |
| | | Germany |
| | | France |
| | | Italy |
| | | Rest of Europe |
| | Asia-Pacific | China |
| | | Japan |
| | | India |
| | | South Korea |
| | | Southeast Asia |
| | | Rest of Asia-Pacific |
| | South America | Brazil |
| | | Rest of South America |
| | Middle East and Africa | |

Key Questions Answered in the Report

What is the current VRAM market size and how fast is it growing?

The VRAM market size is USD 53.07 billion in 2026 and is projected to reach USD 127.63 billion by 2031, translating into a 19.18% CAGR.

Which memory technology is growing the fastest within VRAM?

HBM-based VRAM is the fastest-growing category, forecast to expand at a 19.38% CAGR through 2031 as AI accelerators demand terabyte-per-second-class bandwidth.

Why are HBM prices so much higher than GDDR?

HBM uses 3D stacking and advanced packaging that consume more wafer area and require through-silicon vias, adding a three- to four-times cost premium per gigabyte compared with GDDR.

Which region will drive most of the new VRAM capacity?

Asia-Pacific will add the majority of new wafer and packaging capacity, supported by more than USD 100 billion in 2026 capital outlays from Samsung, SK hynix, Micron, and TSMC.

How are export controls affecting Chinese access to high-bandwidth memory?

Expanded U.S. rules bar shipments of HBM2 and newer generations to Chinese AI developers, pushing them toward legacy DRAM and slowing domestic accelerator roadmaps.

What capacity range is becoming standard for flagship AI GPUs?

Leading accelerators now integrate 192-432 GB of HBM per device, and roadmaps indicate single-package capacities could exceed 384 GB by 2028.
