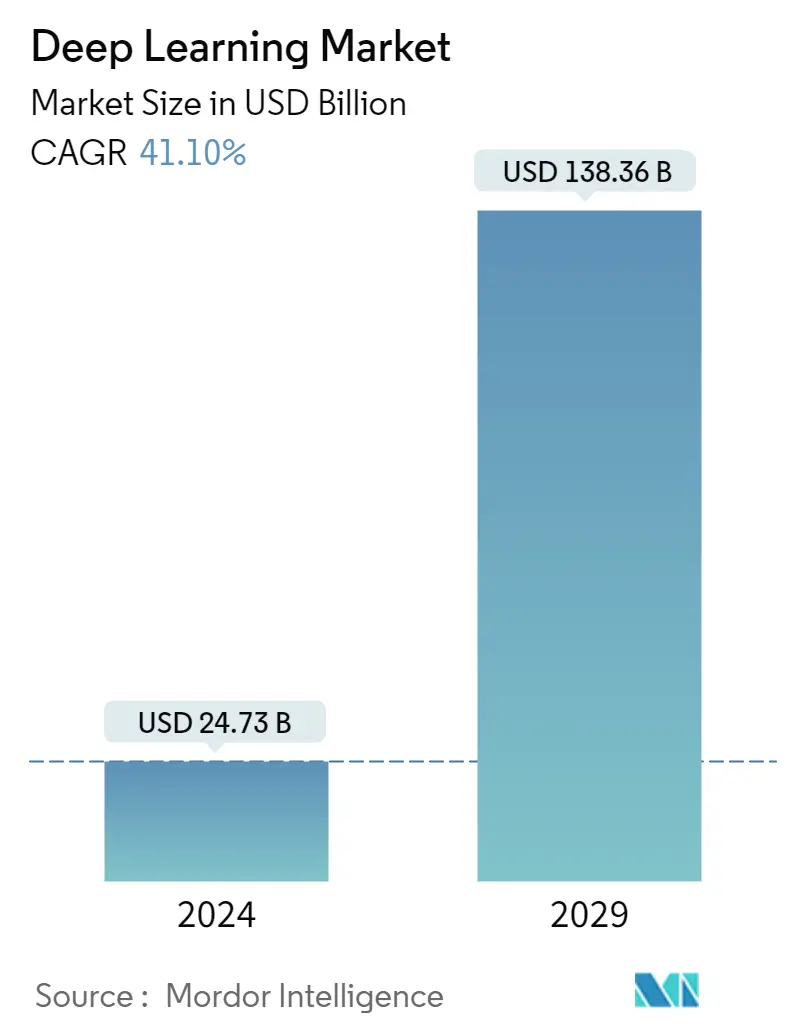

Market Size of Deep Learning Industry

| Metric | Value |
|---|---|
| Study Period | 2019-2029 |
| Market Size (2024) | USD 24.73 billion |
| Market Size (2029) | USD 138.36 billion |
| CAGR (2024-2029) | 41.10% |
| Fastest Growing Market | Asia Pacific |
| Largest Market | North America |



Deep Learning Market Analysis

The Deep Learning Market size is estimated at USD 24.73 billion in 2024, and is expected to reach USD 138.36 billion by 2029, growing at a CAGR of 41.10% during the forecast period (2024-2029).
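The headline figures can be cross-checked with the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. The minimal Python sketch below assumes five annual compounding periods between the 2024 and 2029 estimates; the helper names `cagr` and `project` are illustrative, not part of the report's methodology.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached by compounding start_value at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Market size figures quoted in the report, in USD billion.
size_2024 = 24.73
size_2029 = 138.36

# Assumption: 2024 -> 2029 spans five annual compounding periods.
implied_rate = cagr(size_2024, size_2029, 2029 - 2024)
projected_2029 = project(size_2024, 0.4110, 5)

print(f"Implied CAGR 2024-2029: {implied_rate:.2%}")
print(f"2029 size at the published 41.10% CAGR: USD {projected_2029:.2f} billion")
```

Both directions land within rounding of the published numbers (an implied rate of about 41.1%, and a projected 2029 size of roughly USD 138.3 billion), which is consistent with the report's CAGR having been rounded to two decimal places.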

Deep learning, a subfield of machine learning (ML), has led to breakthroughs in several artificial intelligence tasks, including speech recognition and image recognition. The ability to automate predictive analytics is also fueling interest in ML. Benefits such as better support for product development and improvement, optimized processes and functional workflows, and sales optimization have been driving enterprises across industries to invest in deep learning applications. In addition, recent machine learning approaches have significantly improved model accuracy, and new classes of neural networks have been developed for applications such as image classification and text translation.

- Technological advances, such as growing data center capacity, high computing power, and the ability to carry out tasks without human input, have attracted significant attention. The growth of the deep learning industry is also fueled by the rapid adoption of cloud computing across many sectors.

- Several developments are now advancing deep learning. According to SAS, improvements in algorithms have boosted the performance of deep learning methods. Growing data volumes, including streaming data from the Internet of Things (IoT) and text from social media and physicians' notes, have made it possible to build neural networks with many deep layers. Solving deep learning problems requires substantial computational power because deep learning algorithms are iterative and their complexity increases with the number of layers. The hardware running these algorithms must also handle the large volumes of data needed to train the networks.

- Advances in graphics processing units (GPUs) and distributed cloud computing have put substantial computing power at users' disposal. This development is led by hardware providers such as NVIDIA, Intel, and AMD, which have been improving computational speed and other features and making their hardware compatible with the most widely used open-source frameworks, such as TensorFlow, Cognitive Toolkit (Microsoft), Chainer, Caffe, and PyTorch. As a result, open-source deep learning capabilities have become increasingly popular across enterprises, since these frameworks enable users to build machine learning models quickly and efficiently.

- Deep learning has several serious limitations that must be overcome before it can reach its full potential, such as the black box problem, overfitting, lack of contextual understanding, heavy data requirements, and computational intensity. These limitations could restrain market growth.

- COVID-19 has had a positive impact on the technology sector. Deep learning algorithms have been used to assist in the diagnosis and detection of COVID-19 cases from clinical images such as chest X-rays and CT scans. The growing demand for MRI analysis tools in the healthcare sector has also contributed to the growth of the deep learning market.